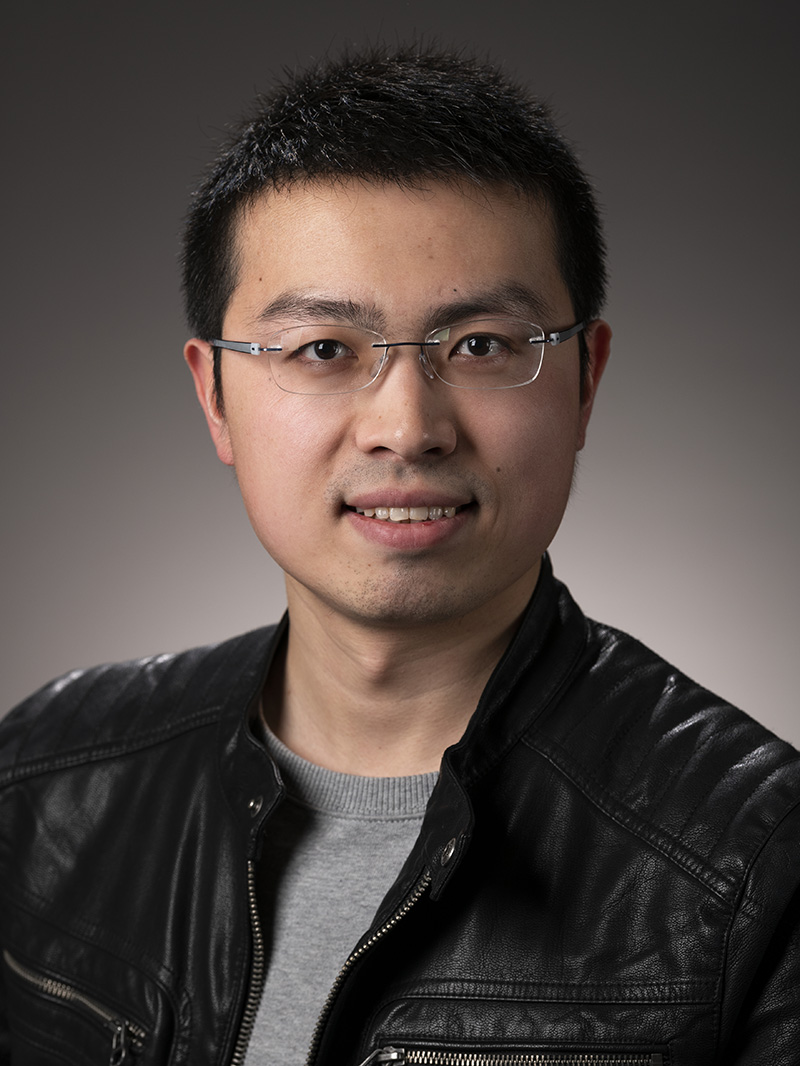

Lingqi Yan (闫令琪)

Assistant Professor

Department of Computer Science

University of California, Santa Barbara

2119 Harold Frank Hall

Santa Barbara, CA 93106

lingqi@cs.ucsb.edu

Curriculum Vitae [Oct 2023]

Latest News

- [Aug 2023] We have 5 papers accepted to SIGGRAPH Asia!

- [Jun 2023] Our paper A Practical and Hierarchical Yarn-based Shading Model for Cloth has won EGSR 2023 Best Paper Award and EGSR 2023 Best Visual Effects Award at the same time!

- [Jun 2023] I will be an associate editor of CVMJ!

- [Jun 2023] I will present a keynote at HPG 2023!

- [May 2023] I am starting to serve as a research consultant at Intel!

- [Apr 2023] We have 4 papers accepted to SIGGRAPH!

- [Apr 2023] I will serve as a member of the SIGGRAPH Asia 2023 Technical Papers Committee!

- [Jul 2022] Our paper Towards Practical Physical-Optics Rendering has won SIGGRAPH 2022 Technical Papers Awards: Best Paper Honorable Mention!

- [May 2023] We have 2 papers accepted to EGSR!

- [Aug 2022] We have 4 papers accepted to SIGGRAPH Asia!

- [Jul 2022] I will serve as a member of the SIGGRAPH 2023 Technical Papers Committee!

- [Mar 2022] We have 4 papers accepted to SIGGRAPH 2022!

- [Dec 2021] Congrats Shlomi Steinberg on winning the NVIDIA Graduate Fellowship!

- [Nov 2021] We have 1 paper accepted to ToG!

- [Sep 2021] I will serve as a member of the SIGGRAPH 2022 Technical Papers Committee!

- [Aug 2021] We have 4 papers accepted to SIGGRAPH Asia 2021!

- [Apr 2021] We have 4 papers accepted to SIGGRAPH 2021!

- [Mar 2021] I am presenting a free online course "GAMES202: Real-time High Quality Rendering" in Chinese!

- [Feb 2021] We have 1 paper accepted to ToG and 1 paper accepted to Eurographics 2021!

- [Aug 2020] We have 1 paper accepted to SIGGRAPH Asia 2020!

- [Feb 2020] I am presenting a free online course "GAMES101: Introduction to Modern Computer Graphics" in Chinese.

- [Dec 2019] Pradeep and I co-hosted the 2nd SoCal Rendering Day at UC Santa Barbara!

- [Jul 2019] We have 2 papers accepted to SIGGRAPH Asia 2019.

- [May 2019] I am glad to receive the ACM SIGGRAPH Outstanding Doctoral Dissertation Award at this year's SIGGRAPH!

- [May 2019] We have 1 paper accepted to EGSR 2019.

- [Mar 2019] We have 2 papers accepted to SIGGRAPH.

- [Dec 2018] I visited top universities and research labs in China (THU, PKU, USTC, ZJU, MSRA, NJUST, BUAA) and gave talks on next-generation rendering.

- [Oct 2018] I attended SoCal Rendering Day at UCSD.

- [Jul 2018] I have joined UC Santa Barbara as an Assistant Professor!

- [Jun 2018] My Ph.D. dissertation has been accepted by the administrators at UC Berkeley. Congrats, Dr. Yan!

- [Apr 2018] I received the C.V. Ramamoorthy Distinguished Research Award for "outstanding contributions to a new research area".

- [Mar 2018] Our paper Rendering Specular Microgeometry with Wave Optics has been accepted to SIGGRAPH 2018!

A Short Bio

I am an Assistant Professor of Computer Science at UC Santa Barbara, co-director of the MIRAGE Lab, and affiliated faculty in the Four Eyes Lab. Before joining UCSB, I received my Ph.D. from the Department of Electrical Engineering and Computer Sciences at UC Berkeley, advised remotely by Prof. Ravi Ramamoorthi at UC San Diego. During my Ph.D., I interned at Walt Disney Animation Studios (2014), Autodesk (2015), Weta Digital (2016), and NVIDIA Research (2017). Earlier, I received my bachelor's degree in Computer Science from Tsinghua University in China in 2013, advised by Prof. Shi-Min Hu and Prof. Kun Xu.

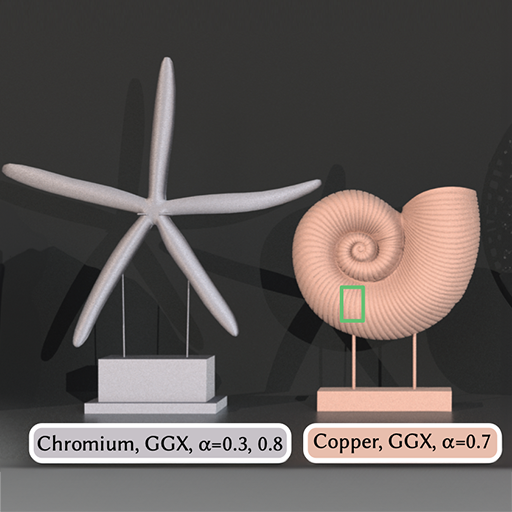

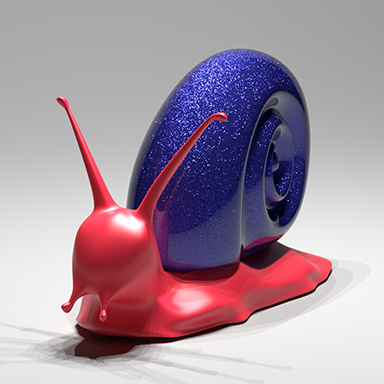

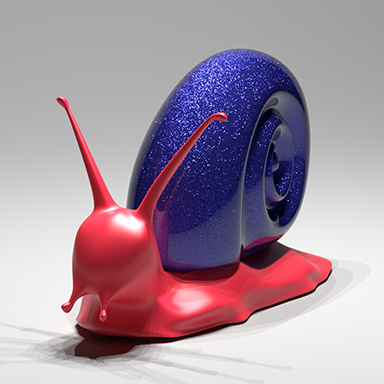

Research

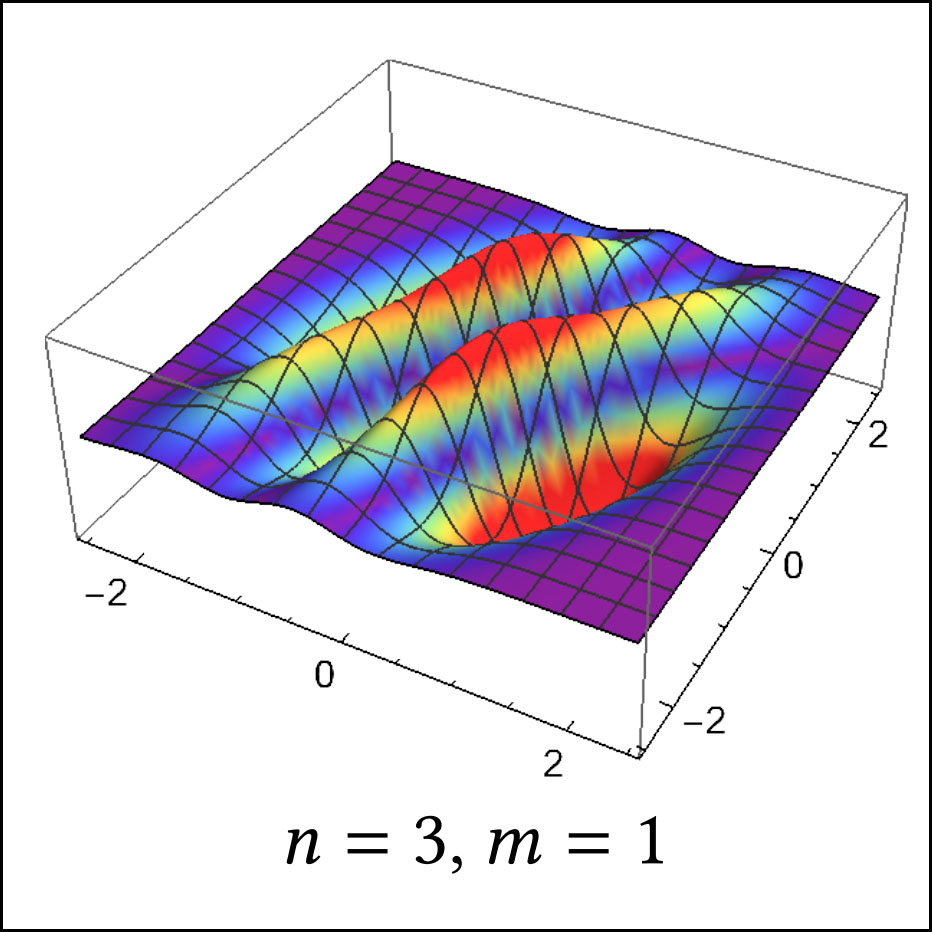

My research is in Computer Graphics. During my Ph.D., I mainly focused on rendering photorealistic visual appearance (a.k.a. hard-core graphics) at real-world complexity, building mathematical and physical foundations that reveal the principles of the visual world. I have introduced original research topics to Computer Graphics, such as detailed rendering from microstructure and real-time ray tracing with reconstruction. I also took the first steps in applying Machine Learning approaches to physically based rendering.

As a young Computer Graphics researcher, my dream is to give people an interactive computer-generated world to live in, just like the ones in the movies The Matrix and Ready Player One. To achieve that, I came up with my own Rendering Equation, as you can see above. I am prudently looking for outstanding graduate students (details and FAQs) to work on related research topics, including but not limited to physically based / image-based rendering, real-time ray tracing, and realistic appearance modeling / acquisition. Let's work together, not to change the world, but to create one.

I am fortunate and proud to work with these phenomenal students:

Current Postdoctoral Fellow: Junqiu Zhu

Current PhD Students: Zilin Xu, Songyin Wu, Zheng Zeng, Yang Zhou

Current MS Students: Chuyan Zhang, Tao Huang

Alumni: Lara Floegel-Shetty (MS, now at Apple), Shlomi Steinberg (PhD, now at U Waterloo), Jinglei Yang (MS, now at Amazon), Lei Xu (MS, now at Microsoft)

Teaching

| Term | Course | Location | Time |
|---|---|---|---|
| Spring 2024 | CS292F: Real-Time High Quality Rendering | Phelps 3526 | TuTh 11:00 AM - 12:00 PM (PT) |
| Winter 2024 | CS190I: Introduction to Offline Rendering | PSYCH 1924 | TuTh 9:30 AM - 10:45 AM (PT) |
| Fall 2023 | CS180/CS280: Introduction to Computer Graphics | IV THEA2 | TuTh 9:30 AM - 10:45 AM (PT) |
| Winter 2023 | CS292F: Real-Time High Quality Rendering | Phelps 3526 | MW 1:00 PM - 2:00 PM (PT) |
| Fall 2022 | CS180/CS280: Introduction to Computer Graphics | BUCHN 1920 | TuTh 9:30 AM - 10:45 AM (PT) |
| Spring 2022 | CS190I: Introduction to Offline Rendering | CHEM 1171 | MW 9:30 AM - 10:45 AM (PT) |
| Winter 2022 | CS291A: Real-Time High Quality Rendering | Phelps 2510 | MW 1:00 PM - 2:00 PM (PT) |
| Fall 2021 | CS180: Introduction to Computer Graphics | SH 1431 | TuTh 11:00 AM - 12:15 PM (PT) |
| Spring 2021 | CS291A: Real-Time High Quality Rendering | Online | Asynchronous Access |
| Spring 2021 | GAMES202: Real-Time High Quality Rendering (in Chinese) | Online | TuSa 10:00 AM - 11:00 AM (GMT+8) |
| Winter 2021 | CS180/CS280: Introduction to Computer Graphics | Online | Asynchronous Access |
| Fall 2020 | CS190I: Introduction to Offline Rendering | Online | Asynchronous Access |
| Spring 2020 | CS291A: Real-Time High Quality Rendering | Online | MW 5:00 PM - 6:15 PM (PT) |
| Spring 2020 | GAMES101: Introduction to Computer Graphics (in Chinese) | Online | TuF 10:00 AM - 11:00 AM (GMT+8) |
| Winter 2020 | CS180: Introduction to Computer Graphics | Phelps 2516 | TuTh 11:00 AM - 12:15 PM (PT) |
| Spring 2019 | CS180: Introduction to Computer Graphics | Girvetz 2128 | MW 11:00 AM - 12:15 PM (PT) |
| Winter 2019 | CS291A: Real-Time High Quality Rendering | Phelps 3526 | MW 3:30 PM - 5:30 PM (PT) |

Publications / Technical Reports* (+4 to appear)

ExtraSS: A Framework for Joint Spatial Super Sampling and Frame Extrapolation

Songyin Wu, Sungye Kim, Zheng Zeng, Deepak Vembar, Sangeeta Jha, Anton Kaplanyan, Ling-Qi Yan

ACM SIGGRAPH Asia 2023 (Conference Track)

Multiple-bounce Smith Microfacet BRDFs using the Invariance Principle

Yuang Cui, Gaole Pan, Jian Yang, Lei Zhang, Ling-Qi Yan, Beibei Wang

ACM SIGGRAPH Asia 2023 (Conference Track)

Extended Path Space Manifolds for Physically Based Differentiable Rendering

Jiankai Xing, Xuejun Hu, Fujun Luan, Ling-Qi Yan, Kun Xu

ACM SIGGRAPH Asia 2023 (Conference Track)

Manifold Path Guiding for Importance Sampling Specular Chains

Zhimin Fan, Pengpei Hong, Jie Guo, Changqing Zou, Yanwen Guo, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2023)

MetaLayer: A Meta-learned BSDF Model for Layered Materials

Jie Guo, Zeru Li, Xueyan He, Beibei Wang, Wenbin Li, Yanwen Guo, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2023)

Ray-aligned Occupancy Map Array for Fast Approximate Ray Tracing

Zheng Zeng, Zilin Xu, Lifan Wu, Lu Wang, Ling-Qi Yan

Computer Graphics Forum (Proceedings of Eurographics Symposium on Rendering 2023)

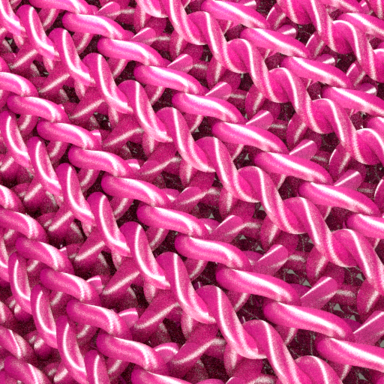

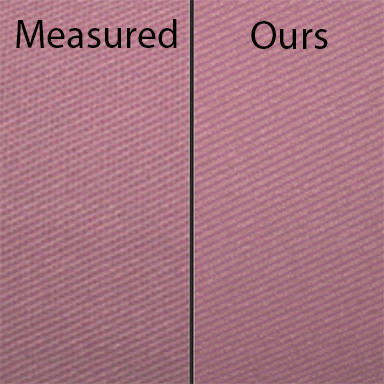

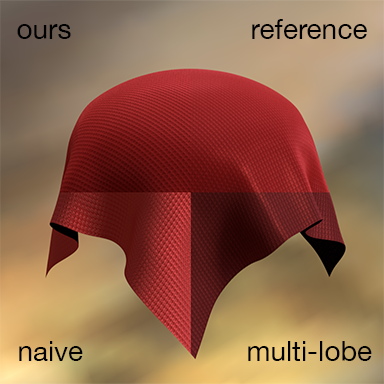

A Practical and Hierarchical Yarn-based Shading Model for Cloth

Junqiu Zhu, Zahra Montazeri, Jean-Marie Aubry, Ling-Qi Yan, Andrea Weidlich

Computer Graphics Forum (Proceedings of Eurographics Symposium on Rendering 2023)

EGSR 2023 Best Paper Award and EGSR 2023 Best Visual Award

On the Properties of the Anisotropic Multivariate Hermite-Gauss Functions

Shlomi Steinberg, Ömer Eğecioğlu, Ling-Qi Yan

Hacettepe Journal of Mathematics and Statistics

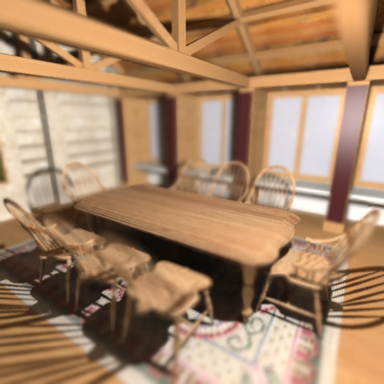

A Realistic Surface-based Cloth Rendering Model

Junqiu Zhu, Adrian Jarabo, Carlos Aliaga, Ling-Qi Yan, Matt Jen-Yuan Chiang

ACM SIGGRAPH 2023 (Conference Track)

Neural Prefiltering for Correlation-aware Levels of Detail

Philippe Weier, Tobias Zirr, Anton Kaplanyan, Ling-Qi Yan, Philipp Slusallek

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2023)

Neural Biplane Representation for BTF Rendering and Acquisition

Jiahui Fan, Beibei Wang, Miloš Hašan, Jian Yang, Ling-Qi Yan

ACM SIGGRAPH 2023 (Conference Track)

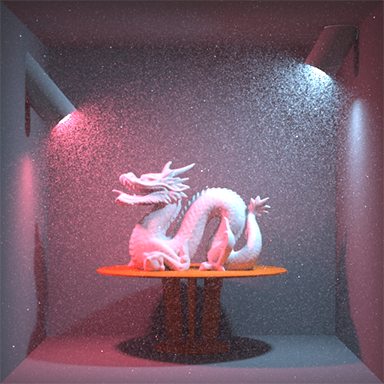

Deep Real-time Volumetric Rendering Using Multi-feature Fusion

Jinkai Hu, Chengzhong Yu, Hongli Liu, Ling-Qi Yan, Yiqian Wu, Xiaogang Jin

ACM SIGGRAPH 2023 (Conference Track)

Lightweight Neural Basis Functions for All-Frequency Shading

Zilin Xu, Zheng Zeng, Lifan Wu, Lu Wang, Ling-Qi Yan

ACM SIGGRAPH Asia 2022 (Conference Track)

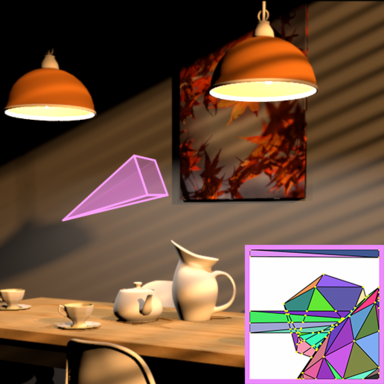

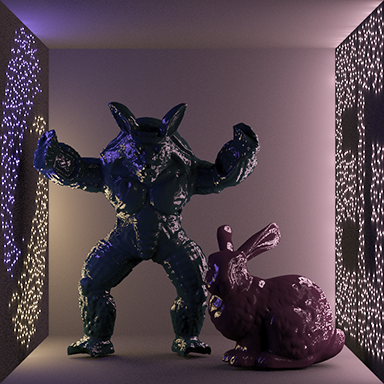

Unbiased Caustics Rendering Guided by Representative Specular Paths

He Li, Beibei Wang, Changhe Tu, Kun Xu, Nicolas Holzschuch, Ling-Qi Yan

ACM SIGGRAPH Asia 2022 (Conference Track)

Woven Fabric Capture from a Single Photo

Wenhua Jin, Beibei Wang, Miloš Hašan, Yu Guo, Steve Marschner, Ling-Qi Yan

ACM SIGGRAPH Asia 2022 (Conference Track)

Differentiable Rendering using RGBXY Derivatives and Optimal Transport

Jiankai Xing, Fujun Luan, Ling-Qi Yan, Xuejun Hu, Houde Qian, Kun Xu

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2022)

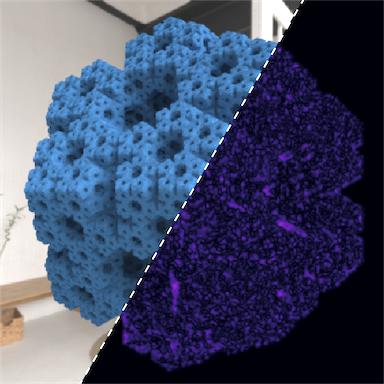

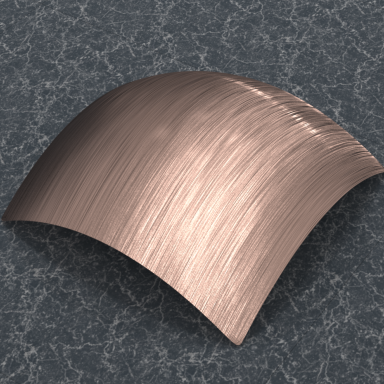

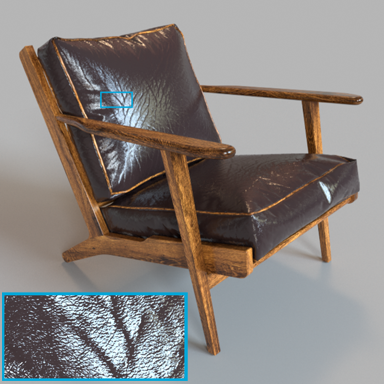

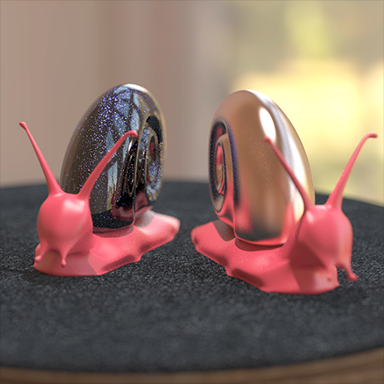

SpongeCake: A Layered Microflake Surface Appearance Model

Beibei Wang, Wenhua Jin, Miloš Hašan, Ling-Qi Yan

ACM Transactions on Graphics, 2022

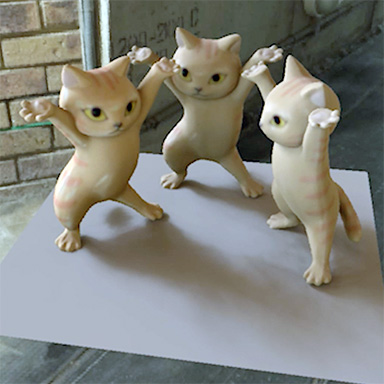

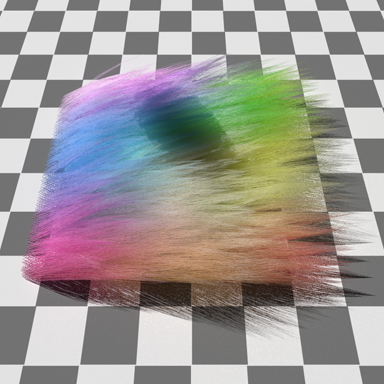

Practical Level-of-detail Aggregation of Fur Appearance

Junqiu Zhu, Sizhe Zhao, Lu Wang, Yanning Xu, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2022)

Position-free Multiple-bounce Computations for Smith Microfacet BSDFs

Beibei Wang, Wenhua Jin, Jiahui Fan, Jian Yang, Nicolas Holzschuch, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2022)

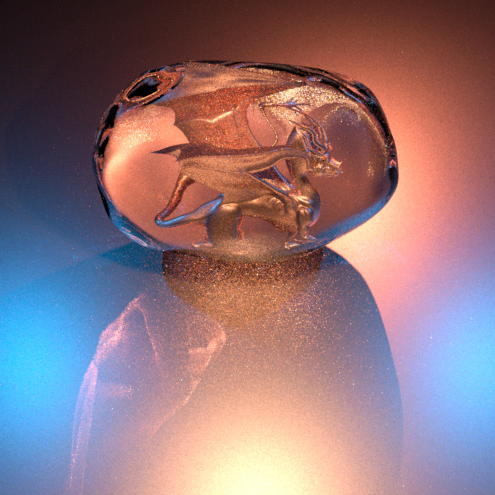

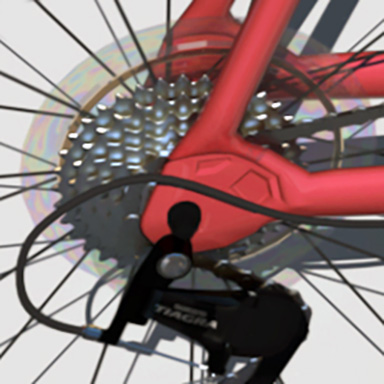

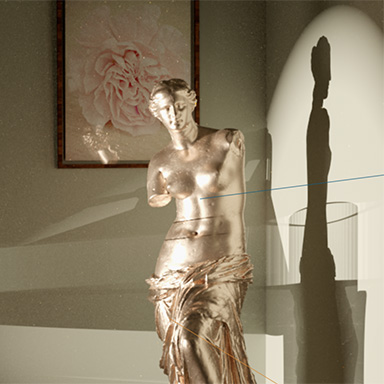

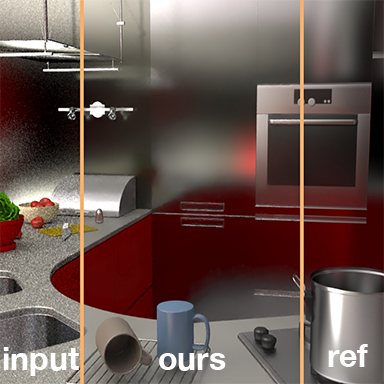

Towards Practical Physical-Optics Rendering

Shlomi Steinberg, Pradeep Sen, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2022)

SIGGRAPH 2022 Technical Papers Awards: Best Paper Honorable Mention

Neural Layered BRDFs

Jiahui Fan, Beibei Wang, Miloš Hašan, Jian Yang, Ling-Qi Yan

ACM SIGGRAPH 2022 (Conference Track)

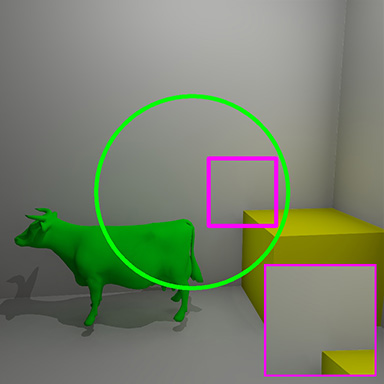

Recent Advances in Glinty Appearance Rendering

Junqiu Zhu, Sizhe Zhao, Yanning Xu, Xiangxu Meng, Lu Wang, Ling-Qi Yan

Computational Visual Media, 2022

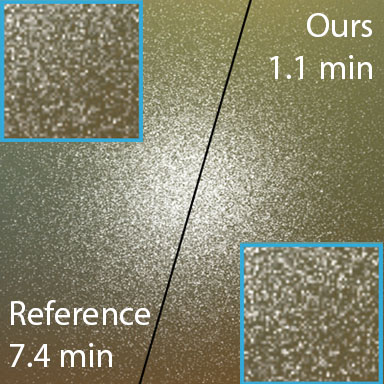

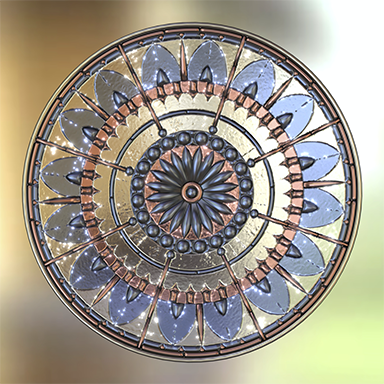

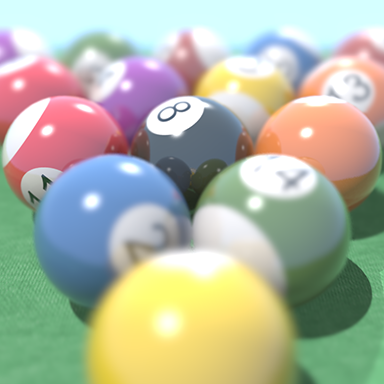

Efficient Specular Glints Rendering with Differentiable Regularization

Jiahui Fan, Beibei Wang, Wenshi Wu, Miloš Hašan, Jian Yang, Ling-Qi Yan

IEEE Transactions on Visualization and Computer Graphics, 2022

Real-Time Microstructure Rendering with MIP-mapped Normal Map Samples

Haowen Tan, Junqiu Zhu, Yanning Xu, Xiangxu Meng, Lu Wang, Ling-Qi Yan

Computer Graphics Forum, 2022

Constant-Cost Spatio-Angular Prefiltering of Glinty Appearance Using Tensor Decomposition

Hong Deng, Yang Liu, Beibei Wang, Jian Yang, Lei Ma, Nicolas Holzschuch, Ling-Qi Yan

ACM Transactions on Graphics, 2022

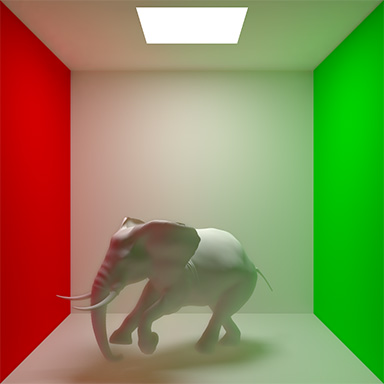

Physical Light-Matter Interaction in Hermite-Gauss Space

Shlomi Steinberg, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2021)

ExtraNet: Real-time Extrapolated Rendering for Low-latency Temporal Supersampling

Jie Guo, Xihao Fu, Liqiang Lin, Hengjun Ma, Yanwen Guo, Shiqiu (Edward) Liu, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2021)

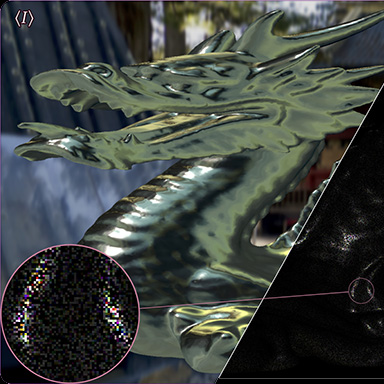

Ensemble Denoising for Monte Carlo Renderings

Shaokun Zheng, Fengshi Zheng, Kun Xu, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2021)

Fast and Accurate Spherical Harmonics Products

Hanggao Xin, Zhiqian Zhou, Di An, Ling-Qi Yan, Kun Xu, Shi-Min Hu, Shing-Tung Yau

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2021)

A Survey on Homogeneous Participating Media Rendering

Wenshi Wu, Beibei Wang, Ling-Qi Yan

Computational Visual Media, 2021

Temporally Reliable Motion Vectors for Better Use of Temporal Information

Zheng Zeng, Shiqiu (Edward) Liu, Jinglei Yang, Lu Wang, Ling-Qi Yan

Ray Tracing Gems II: Chapter 25

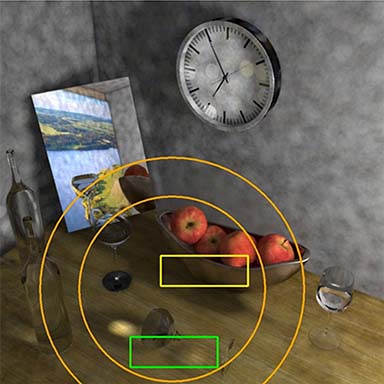

Foveated Photon Mapping

Xuehuai Shi, Lili Wang, Xiaoheng Wei, Ling-Qi Yan

IEEE Transactions on Visualization and Computer Graphics, 2021

Rendering of Subjective Speckle Formed by Rough Statistical Surfaces

Shlomi Steinberg, Ling-Qi Yan

ACM Transactions on Graphics, 2021

A Generic Framework for Physical Light Transport

Shlomi Steinberg, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2021)

Neural Complex Luminaires: Representation and Rendering

Junqiu Zhu, Yaoyi Bai, Zilin Xu, Steve Bako, Edgar Velázquez-Armendáriz, Lu Wang, Pradeep Sen, Miloš Hašan, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2021)

Volumetric Appearance Stylization With Stylizing Kernel Prediction Network

Jie Guo, Mengtian Li, Zijing Zong, Yuntao Liu, Jingwu He, Yanwen Guo, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2021)

Highlight-Aware Two-Stream Network for Single-Image SVBRDF Acquisition

Jie Guo, Shuichang Lai, Chengzhi Tao, Yuelong Cai, Lei Wang, Yanwen Guo, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2021)

Vectorization for Fast, Analytic, and Differentiable Visibility

Yang Zhou, Lifan Wu, Ravi Ramamoorthi, Ling-Qi Yan

ACM Transactions on Graphics, 2021

Temporally Reliable Motion Vectors for Real-time Ray Tracing

Zheng Zeng, Shiqiu (Edward) Liu, Jinglei Yang, Lu Wang, Ling-Qi Yan

Computer Graphics Forum (Proceedings of Eurographics 2021)

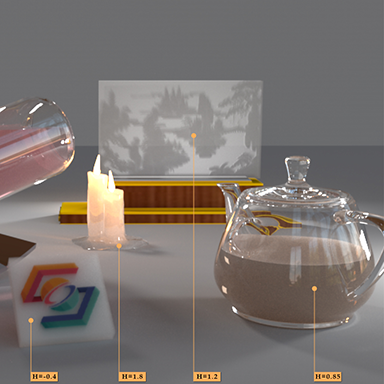

A Bayesian Inference Framework for Procedural Material Parameter Estimation

Yu Guo, Miloš Hašan, Ling-Qi Yan, Shuang Zhao

Computer Graphics Forum (Proceedings of Pacific Graphics 2020)

Path-based Monte Carlo Denoising Using a Three-Scale Neural Network

Weiheng Lin, Beibei Wang, Jian Yang, Lu Wang, Ling-Qi Yan

Computer Graphics Forum, Dec 2020

Path Cuts: Efficient Rendering of Pure Specular Light Transport

Beibei Wang, Miloš Hašan, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2020)

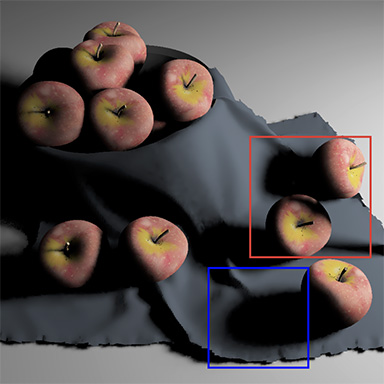

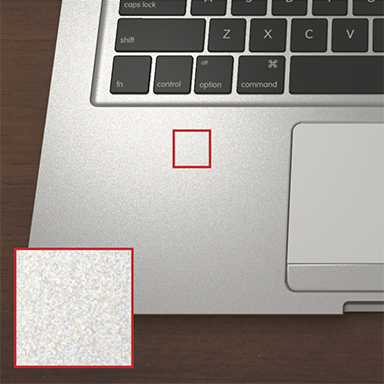

Example-Based Microstructure Rendering with Constant Storage

Beibei Wang, Miloš Hašan, Nicolas Holzschuch, Ling-Qi Yan

ACM Transactions on Graphics, 2020

Learning Generative Models for Rendering Specular Microgeometry

Alexandr Kuznetsov, Miloš Hašan, Zexiang Xu, Ling-Qi Yan, Bruce Walter, Nima Khademi Kalantari, Steve Marschner, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2019)

GradNet: Unsupervised Deep Screened Poisson Reconstruction for Gradient-Domain Rendering

Jie Guo, Mengtian Li, Quewei Li, Yuting Qiang, Bingyang Hu, Yanwen Guo, Ling-Qi Yan

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2019)

Fractional Gaussian Fields for Modeling and Rendering of Spatially-Correlated Media

Jie Guo, Yanjun Chen, Bingyang Hu, Ling-Qi Yan, Yanwen Guo, Yuntao Liu

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2019)

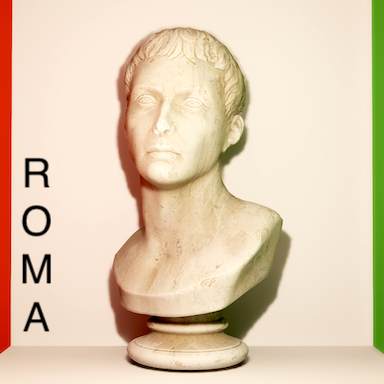

Accurate Appearance Preserving Prefiltering for Rendering Displacement-Mapped Surfaces

Lifan Wu, Shuang Zhao, Ling-Qi Yan, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2019)

Rendering Specular Microgeometry with Wave Optics

Ling-Qi Yan, Miloš Hašan, Bruce Walter, Steve Marschner, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2018)

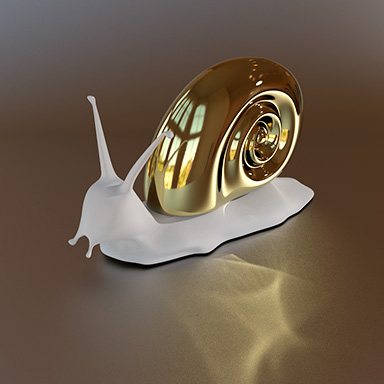

A BSSRDF Model for Efficient Rendering of Fur with Global Illumination

Ling-Qi Yan, Weilun Sun, Henrik Wann Jensen, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2017)

Multiple Axis-Aligned Filters for Rendering of Combined Distribution Effects

Lifan Wu, Ling-Qi Yan, Alexandr Kuznetsov, Ravi Ramamoorthi

Proceedings of the Eurographics Symposium on Rendering, 2017

An Efficient and Practical Near and Far Field Fur Reflectance Model

Ling-Qi Yan, Henrik Wann Jensen, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2017)

Antialiasing Complex Global Illumination Effects in Path-space

Laurent Belcour, Ling-Qi Yan, Ravi Ramamoorthi, Derek Nowrouzezahrai

ACM Transactions on Graphics, 2016

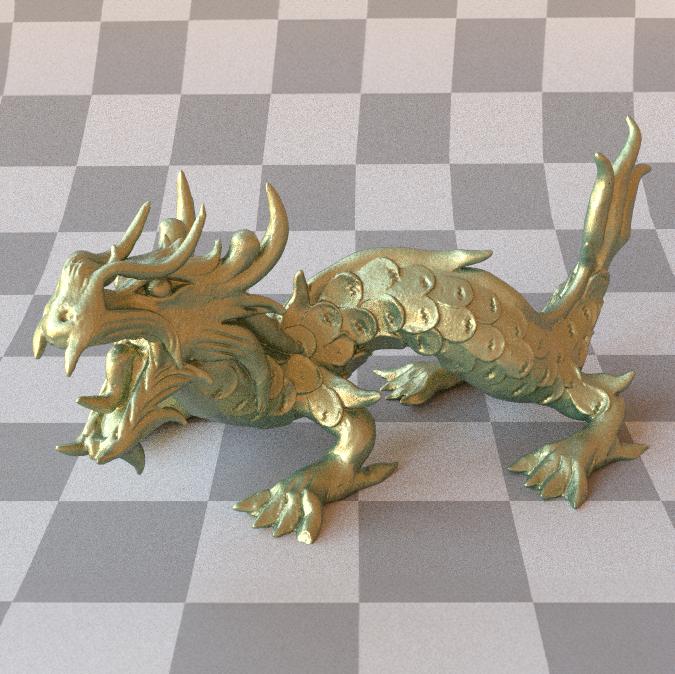

Position-Normal Distributions for Efficient Rendering of Specular Microstructure

Ling-Qi Yan, Miloš Hašan, Steve Marschner, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2016)

Physically-Accurate Fur Reflectance: Modeling, Measurement and Rendering

Ling-Qi Yan, Chi-Wei Tseng, Henrik Wann Jensen, Ravi Ramamoorthi

ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2015)

Fast 4D Sheared Filtering for Interactive Rendering of Distribution Effects

Ling-Qi Yan, Soham Uday Mehta, Ravi Ramamoorthi, Frédo Durand

ACM Transactions on Graphics, 2015

Rendering Glints on High-Resolution Normal-Mapped Specular Surfaces

Ling-Qi Yan*, Miloš Hašan*, Wenzel Jakob, Jason Lawrence, Steve Marschner, Ravi Ramamoorthi

(*: dual first authors)

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2014)

Discrete Stochastic Microfacet Models

Wenzel Jakob, Miloš Hašan, Ling-Qi Yan, Jason Lawrence, Ravi Ramamoorthi, Steve Marschner

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2014)

Ph.D. Dissertation

Physically-based Modeling and Rendering of Complex Visual Appearance

Lingqi Yan, advised by Ravi Ramamoorthi (Summer 2018)

Doctor of Philosophy in Computer Science in the Graduate Division of the University of California, Berkeley

2019 ACM SIGGRAPH Outstanding Doctoral Dissertation Award

Misc

I am a huge fan of video games. In fact, they are the reason I decided, back in primary school, to pursue rendering and Computer Graphics as my lifelong career. We had a Hearthstone team at UC Berkeley, and we made it to the playoffs of the TeSPA Hearthstone Collegiate National Championship. I used to play PUBG, but our team (FYI, SoEasy) has recently shifted its interest to Apex Legends, and we hit Platinum in Season 2 and Season 6. Here is a screenshot of a chicken dinner we had recently.

I play the piano a little, but classical only (with a National Piano Certificate of Level 10 in China, the topmost level for non-professional amateurs). I'm especially fond of Chopin. Here are some short recordings of my home performances (F. Chopin: Waltz Op. 64 No. 2 in C-Sharp Minor, Nocturne Op. 9 No. 1 in B-Flat Minor, and Nocturne Op. 9 No. 2 in E-Flat Major). I'll upload more as I continue to update my website.

My legal name is spelled Lingqi Yan; I use Ling-Qi Yan only for publications (due to a lab tradition at Tsinghua University). My name is pronounced Ling--Chi--Yen, and here are some funny ways people have mispronounced it (and I like them all).

"Lingqi Yan, first of his name, the unrejected, author of seven papers, breaker of the record, and the chicken eater." -- Born to be Legendary, by Lifan Wu @ UCSD.